by Sandra McLean, York University

Credit: Unsplash/CC0 Public Domain

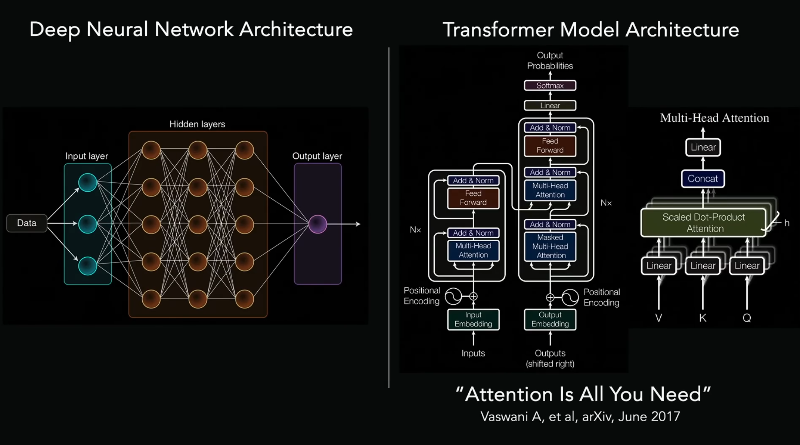

A new study by York University researchers has found a potentially striking flaw in artificial intelligence (AI) models. Over the last decade, artificial neural networks (ANNs), a type of AI model built to solve vision tasks for computers, have surprisingly emerged as the current best understanding of how our own brain's visual system works. But does current AI really work like a primate brain?

"Artificial intelligence systems are often described as 'brain-like' because they can predict activity in parts of the brain that help us recognize objects," says York University Assistant Professor Kohitij Kar, senior author of a new study. "Until now, scientists mostly tested this in one direction. They asked whether AI models can predict brain activity."

In this study, the researchers flipped the question: if AI truly mirrors the brain, shouldn't brain activity also be able to predict what's happening inside the AI model? They developed a reverse predictivity test to find the answer. The findings are published in the journal Nature Machine Intelligence.
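The two directions of the test can be illustrated with a minimal sketch. It is not the authors' toolkit or method: the ridge regression, the Pearson correlation score, the synthetic data and every name below are assumptions chosen for illustration. The simulated "model" shares some signal with the simulated "neurons" but also carries extra features the neurons never see, so it can predict the neurons well while the neurons cannot predict all of the model.

```python
import numpy as np

def ridge_fit_predict(x_train, y_train, x_test, alpha=1.0):
    """Fit closed-form ridge regression on the training set, predict the test set."""
    x_mean, y_mean = x_train.mean(axis=0), y_train.mean(axis=0)
    xc, yc = x_train - x_mean, y_train - y_mean
    weights = np.linalg.solve(xc.T @ xc + alpha * np.eye(xc.shape[1]), xc.T @ yc)
    return (x_test - x_mean) @ weights + y_mean

def columnwise_pearson(a, b):
    """Pearson correlation between matching columns of a and b."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    return (a * b).sum(axis=0) / (np.linalg.norm(a, axis=0) * np.linalg.norm(b, axis=0))

def predictivity(source, target, n_train, alpha=1.0):
    """How well each target unit is predicted from the source on held-out images."""
    pred = ridge_fit_predict(source[:n_train], target[:n_train], source[n_train:], alpha)
    return columnwise_pearson(pred, target[n_train:])

# Synthetic stand-ins (hypothetical sizes, not the study's data).
rng = np.random.default_rng(0)
n_images, n_train = 600, 400
n_latent, n_neurons, n_model_shared, n_model_extra = 5, 40, 20, 20

latent = rng.normal(size=(n_images, n_latent))  # signal common to brain and model
neurons = latent @ rng.normal(size=(n_latent, n_neurons)) \
    + 0.5 * rng.normal(size=(n_images, n_neurons))
model_shared = latent @ rng.normal(size=(n_latent, n_model_shared)) \
    + 0.5 * rng.normal(size=(n_images, n_model_shared))
model_extra = rng.normal(size=(n_images, n_model_extra))  # features unrelated to the neurons
model = np.hstack([model_shared, model_extra])

forward = predictivity(model, neurons, n_train)  # model features -> neurons
reverse = predictivity(neurons, model, n_train)  # neurons -> model features

print("forward (model -> brain), mean r:", round(float(forward.mean()), 2))
print("reverse (brain -> model), mean r:", round(float(reverse.mean()), 2))
print("reverse, shared features only:  ", round(float(reverse[:n_model_shared].mean()), 2))
print("reverse, extra features only:   ", round(float(reverse[n_model_shared:].mean()), 2))
```

In this toy setup, the per-feature reverse scores also separate the model features that track the simulated neurons from those that do not, which is the kind of breakdown a one-directional test cannot provide.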

"Ultimately, we need computational models to truly understand the underlying neural mechanisms of how we recognize objects. How do we see objects move? While it's a very easy task that we do every day, computationally, though, it's a very challenging problem," says Kar, the Canada Research Chair in Visual Neuroscience and a member of York's Center for Vision Research and Center for Integrative and Applied Neuroscience.

Similar predictive performance, different underlying strategies across models. Credit: Nature Machine Intelligence (2026). DOI: 10.1038/s42256-026-01204-0

The researchers, including York Postdoctoral Fellow Sabine Muzellec, a Connected Minds trainee, used 1,320 natural or naturalistic synthetic images of a bear, an elephant, a face, an apple, a car, a dog, a chair, a plane, a bird and a zebra, placed against natural, indoor or outdoor background scenes.

They also used an additional 300 images of the same objects rendered differently, such as outlines, drawings, schematized forms and artistic variations.

Striking gaps between brain and models

"The results were striking. While AI models can predict the neurons we recorded in the brain fairly well, the brain cannot equally predict many of the model's internal features. Interestingly, this is not the case when neurons from one brain are compared against ones from another brain," says Kar.

The problem with ANNs solving vision differently is that the gap between primate brains and models will widen and compound over time if it is not corrected now. Prediction has always been tested in one direction, with the model predicting the neurons. If the reverse does not hold, then these models don't really serve as good hypotheses for the brain, adds Kar.

"The findings suggest that today's AI systems solve visual tasks partly using internal strategies that the brain may not use. Importantly, the parts of AI models that align with the brain are also better at predicting real human behavior," says Kar.

Implications for research and real-world use

AI models are increasingly used to help design experiments to understand human behavior, including in clinical settings. Such uses assume that the AI model sees the world similarly to how a human brain does.

"Our findings challenge how similar current AI systems really are to the primate brain. We show that models that were previously thought to be brain-like rely on internal components that the brain does not appear to use. We provide a well-vetted diagnostic metric for the field," says Muzellec.

If AI models can become more brain-like, they could in the future help people with everything from post-traumatic stress disorder to autism, but for now, using them in experiments and to understand human behavior is fraught. Similar models are also being used now for auditory systems, language systems and motor systems, but again, if they aren't working as expected, that's an issue.

"Our approach helps identify which parts of an ANN truly match brain activity, allowing us to build more reliable models for understanding how people see and interpret the world," says Kar. "This is especially important for our autism research program, which builds on models of the neurotypical brain as a baseline."

The study's authors have also released a testing toolkit that AI developers can use to test and improve their models going forward.

The study introduces a new standard for building AI that is not just powerful—but truly brain-aligned.

Publication details: Sabine Muzellec et al, Reverse predictivity for bidirectional comparison of neural networks and biological brains, Nature Machine Intelligence (2026). DOI: 10.1038/s42256-026-01204-0

Journal information: Nature Machine Intelligence
